AI-Generated Clinical Notes and PHI: The Privacy Risk Healthcare Leaders Are Ignoring

By: Christopher Perry, CEO — PrettyFluid Technologies, Inc.

In the race to adopt AI-powered documentation tools, healthcare organizations are moving fast. Clinicians dictate notes, AI transcribes them, and the EHR is updated in seconds. The efficiency gains are real. So is the exposure.

What most organizations have yet to internalize is that AI-generated clinical notes are, at their core, a new PHI pipeline — one that routes protected health information through third-party infrastructure that was never designed with HIPAA as a primary constraint.

The Hidden Data Flow

When a clinician dictates a patient encounter, that audio is transmitted to an AI service. The transcript is returned. The note is generated. At every step, PHI is in motion, and in many deployments it transits infrastructure that the covered entity does not control, cannot audit, and has not adequately evaluated under HIPAA’s minimum necessary standard.

The Business Associate Agreement (BAA) is only one component of a complete compliance posture. A BAA establishes legal accountability, but it does not encrypt data in transit, does not guarantee data residency, and may still leave an AI vendor free to train on de-identified data derived from your patients’ notes. The contractual protections and the technical protections are different conversations, and in 2026, too few organizations are having the technical one.

What the HIPAA Security Rule Actually Requires

The HIPAA Security Rule, now in its final updated form following the HHS rulemaking completed in late 2025, requires covered entities to conduct thorough risk analyses before deploying new technology that touches ePHI. AI clinical note generation tools are subject to these requirements. The risk analysis must account for the full data lifecycle — from audio capture to final storage — including every system the data touches along the way.
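One way to make the lifecycle requirement concrete is to inventory each hop the data takes and flag the ones that need attention in the risk analysis. The sketch below is a minimal illustration in Python; the hop names, the fields, and the flagging rules are assumptions chosen for this example, not a regulatory checklist.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Hop:
    """One system that PHI touches between audio capture and final storage."""
    name: str
    operated_by: str               # "covered_entity" or a (hypothetical) vendor name
    encrypted_in_transit: bool
    retention_days: Optional[int]  # None means the retention period is unknown
    covered_by_baa: bool           # only checked for hops run by a third party


def open_findings(hops: List[Hop]) -> List[str]:
    """Return human-readable findings for hops that need review."""
    findings = []
    for hop in hops:
        external = hop.operated_by != "covered_entity"
        if not hop.encrypted_in_transit:
            findings.append(f"{hop.name}: data not encrypted in transit")
        if external and not hop.covered_by_baa:
            findings.append(f"{hop.name}: third party without BAA coverage")
        if external and hop.retention_days is None:
            findings.append(f"{hop.name}: vendor retention period unknown")
    return findings


# Hypothetical pipeline for a dictated encounter.
pipeline = [
    Hop("audio capture", "covered_entity", True, 0, True),
    Hop("transcription service", "vendor_a", True, None, True),
    Hop("note generation", "vendor_a", True, 30, False),
    Hop("EHR storage", "covered_entity", True, None, True),
]

for finding in open_findings(pipeline):
    print(finding)
```

An inventory like this is only an input to the risk analysis; its value is in forcing every hop to be named and every unknown to be written down.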

If your organization deployed an AI documentation tool in the last two years without updating your risk analysis, you are likely out of compliance, whether or not the vendor has a BAA on file.

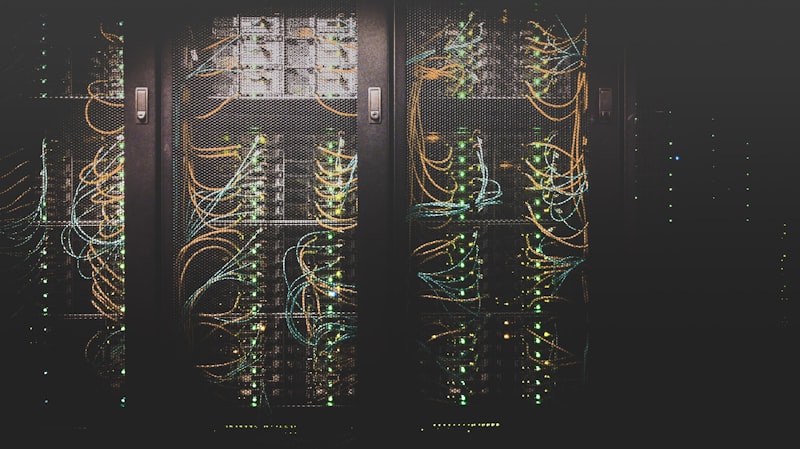

The Architecture Problem

Most AI clinical documentation tools are cloud-native SaaS products. The PHI resides on their servers, processed by their models, governed by their retention policies. Some offer zero-retention options; many do not. Even where a vendor offers one, the covered entity must verify, rather than simply trust, that deletion is actually occurring.
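Verification can start with something as simple as periodically asking the vendor for recordings that should no longer exist. The sketch below assumes a hypothetical `fetch` callable that wraps whatever retrieval interface the vendor exposes and returns `None` when a recording is gone; no real vendor API is implied. A clean result shows only that the recording is no longer retrievable through that interface, not that backups or derived data were purged.

```python
from datetime import datetime, timedelta, timezone
from typing import Callable, Dict, List, Optional


def find_retained_recordings(
    uploaded_at: Dict[str, datetime],
    fetch: Callable[[str], Optional[bytes]],
    retention: timedelta,
    now: Optional[datetime] = None,
) -> List[str]:
    """Return IDs of recordings past the agreed retention window that are still served."""
    now = now or datetime.now(timezone.utc)
    retained = []
    for recording_id, uploaded in sorted(uploaded_at.items()):
        past_window = now - uploaded > retention
        if past_window and fetch(recording_id) is not None:
            retained.append(recording_id)
    return retained


# Stand-in for a vendor that failed to delete one recording (hypothetical data).
vendor_store = {"rec-002": b"audio"}
now = datetime(2026, 1, 10, tzinfo=timezone.utc)
uploads = {
    "rec-001": now - timedelta(days=5),
    "rec-002": now - timedelta(days=5),
    "rec-003": now - timedelta(hours=1),
}

print(find_retained_recordings(uploads, vendor_store.get, timedelta(days=1), now))
```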

The alternative — one that we believe represents the correct long-term direction for the industry — is a zero-knowledge architecture where PHI is encrypted before it leaves the covered entity’s control, and the AI vendor processes only ciphertext. This approach is technically more complex and today represents the frontier rather than the baseline. But the regulatory and reputational pressure building around AI and PHI makes it the direction that forward-thinking organizations should be evaluating now, rather than after an incident.
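Processing ciphertext end to end is beyond a short example, but a smaller step in the same direction is to keep direct identifiers inside the covered entity's boundary. The sketch below is pseudonymization, not zero-knowledge encryption: it swaps known identifier strings for keyed tokens before text is sent out and restores them when the draft note comes back. The function names, the token format, and the assumption that identifiers are already known from the patient record are all illustrative, and the clinical content itself still leaves in the clear.

```python
import hashlib
import hmac
from typing import Dict, List, Tuple


def pseudonymize(text: str, identifiers: List[str], key: bytes) -> Tuple[str, Dict[str, str]]:
    """Replace each known identifier with a keyed token; return the text and a local lookup table."""
    lookup: Dict[str, str] = {}
    # Longest first, so a long value is replaced before any shorter value it contains.
    for value in sorted(identifiers, key=len, reverse=True):
        digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
        token = f"[ID-{digest[:12]}]"
        lookup[token] = value
        text = text.replace(value, token)
    return text, lookup


def restore(text: str, lookup: Dict[str, str]) -> str:
    """Put the original identifiers back into text returned by the vendor."""
    for token, value in lookup.items():
        text = text.replace(token, value)
    return text


# Fictional patient data and a demo key; a real key would live in a key manager.
key = b"demo-key-not-for-production"
transcript = "Jane Doe, MRN 00482915, reports chest pain for two days."
outbound, lookup = pseudonymize(transcript, ["Jane Doe", "00482915"], key)

print(outbound)                   # identifiers replaced by tokens
print(restore(outbound, lookup))  # original transcript
```

The lookup table and the key never leave the covered entity, so the vendor sees tokens it cannot reverse on its own. Free-text identifiers that are not in the known list would still pass through, which is one reason this is a stopgap rather than a substitute for encrypting before the data leaves.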

Questions Every Healthcare CIO Should Be Asking

- Where does PHI reside during AI processing, and in which geographic region?

- Does our BAA with this vendor cover AI model training as well as inference?

- Has our risk analysis been updated since we deployed this tool?

- Can we verify that audio recordings are deleted after transcription?

- What happens to our data if the AI vendor is acquired or goes bankrupt?

These are the questions the HHS Office for Civil Rights (OCR) will ask in the event of a breach, and the answers will help determine whether a penalty follows.

The efficiency of AI-generated clinical documentation is worth capturing. The privacy risk it introduces is worth taking seriously. In 2026, organizations that treat these as separate conversations will find that the second one has a way of catching up with the first.