On 1 August 2024, the European Union’s Artificial Intelligence Act entered into force, establishing the world’s first comprehensive legal framework for regulating AI systems. Like GDPR before it, the AI Act is expected to become a de facto global standard, shaping how AI is developed and deployed well beyond Europe’s borders.

The Act takes a risk-based approach. AI systems are classified as unacceptable risk (banned outright), high risk (subject to strict requirements before deployment), limited risk (transparency obligations), or minimal risk (largely unregulated). High-risk categories include AI used in healthcare, employment, education, critical infrastructure, and law enforcement.

For organizations deploying AI in healthcare, whether for clinical decision support, diagnostic tools, or patient risk stratification, the implications are significant. Providers of high-risk AI systems must maintain detailed technical documentation, undergo conformity assessments, register the system in an EU database, and build in human oversight mechanisms.

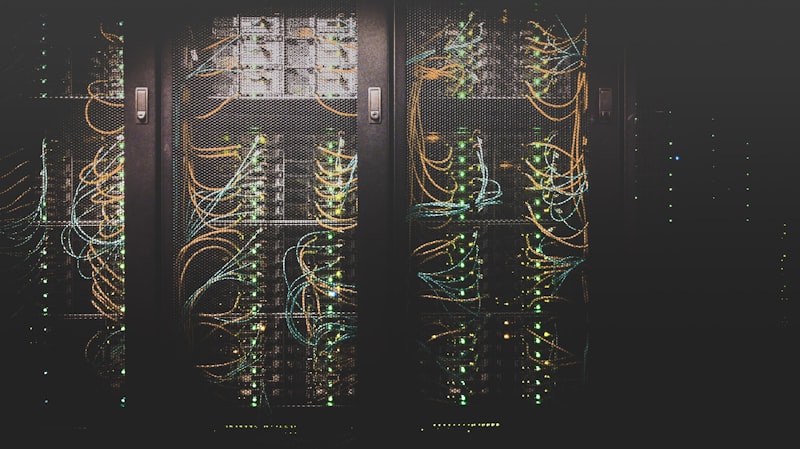

The data governance requirements are particularly relevant. High-risk AI systems must be trained, validated, and tested on data that is relevant, sufficiently representative, and, to the best extent possible, free of errors and complete. This creates a direct link between AI compliance and the quality of underlying data infrastructure.
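To make that link concrete, here is a minimal sketch of the kind of automated check a data team might run before training. Everything in it is an assumption for illustration: the function names, the field names, the reference population shares, and the thresholds are hypothetical, and the Act itself does not prescribe specific metrics or cutoffs.

```python
from collections import Counter


def missing_rates(records, fields):
    """Return the fraction of records where each field is absent or None."""
    if not records:
        raise ValueError("records must not be empty")
    total = len(records)
    return {
        field: sum(1 for r in records if r.get(field) is None) / total
        for field in fields
    }


def representation_gaps(records, group_field, reference_shares):
    """Return observed share minus reference share for each listed group."""
    if not records:
        raise ValueError("records must not be empty")
    total = len(records)
    counts = Counter(r.get(group_field) for r in records)
    return {
        group: counts.get(group, 0) / total - expected
        for group, expected in reference_shares.items()
    }


def audit(records, fields, group_field, reference_shares,
          max_missing=0.05, max_gap=0.10):
    """Collect human-readable findings that exceed the illustrative thresholds.

    max_missing and max_gap are placeholder values, not regulatory limits.
    """
    findings = []
    for field, rate in missing_rates(records, fields).items():
        if rate > max_missing:
            findings.append(f"{field}: {rate:.0%} missing")
    gaps = representation_gaps(records, group_field, reference_shares)
    for group, gap in gaps.items():
        if abs(gap) > max_gap:
            findings.append(f"{group_field}={group}: share off by {gap:+.0%}")
    return findings
```

Calling `audit(records, ["age", "creatinine"], "sex", {"F": 0.5, "M": 0.5})` on a list of patient dictionaries would return a list of findings to review. A real pipeline would also need to log these results, since documented evidence of the checks is part of what a conformity assessment looks for.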

For companies building products that will touch European users or European health data, the AI Act is not a future concern. Prohibited practices became enforceable on 2 February 2025. Most high-risk system requirements apply from 2 August 2026, with those for AI embedded in regulated products such as medical devices following on 2 August 2027. The time to plan was yesterday. The next best time is now.